In a previous post, Add Search to Hugo Sites With Azure Search, I explained how I added a search capability to my site using Azure Search. In this post, I’ll show you how I trigger Azure Search to reindex the site each time it’s redeployed as part of my existing GitHub Actions configuration.

As I explained in my post Automated Hugo Releases with GitHub Actions, I use GitHub Actions to deploy my site. I wanted to make sure that whenever the site was updated, Azure Search would reindex it.

What I did was set up an additional job for the live site. Whenever a deployment succeeds, I want to tell Azure Search to reindex the site, and I also want to purge both the search results page and the search index data file from the CDN.

This involved two steps:

- Create a service principal (Microsoft Entra ID application) that will be used to make the changes in Azure

- Update the GitHub Actions workflow

Create Microsoft Entra ID service principal

As I said above, I want to do two things in Azure: purge the CDN and re-run the indexer. I wanted to do both with the Azure CLI, but its support for Azure Search is quite limited at this time, so I was stuck using the REST API for the indexer.

However, I can use the CLI for purging the CDN. I’d prefer to have an agent do this rather than a user, so I created a service principal that was granted permission to do this.

To do this, create a new Microsoft Entra ID application in your tenant from within the Azure portal. You don't need to grant it any API permissions, but you do need to create a client secret.

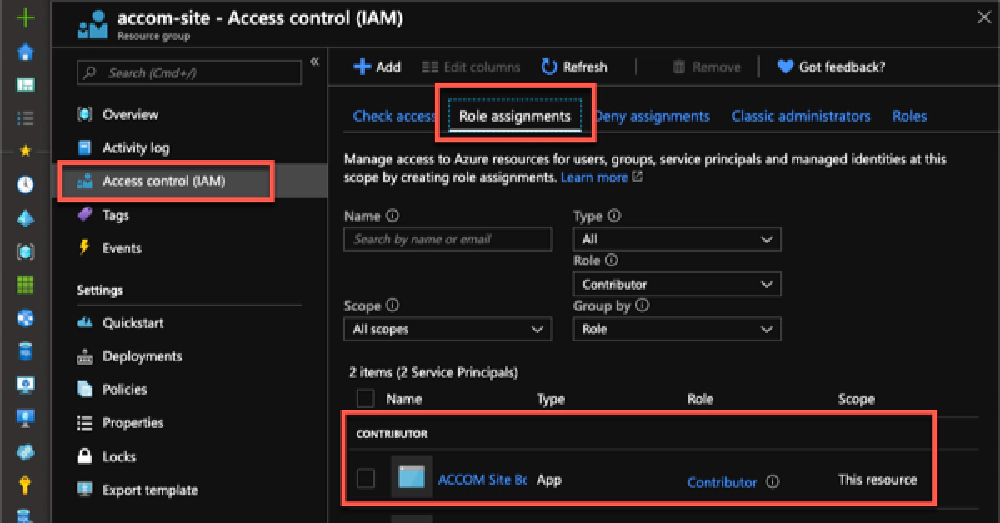

Once you have the app created, head over to your resource group or CDN, whichever level you want to give this app access to, and select the Access control (IAM) menu item:

Azure Resource Group IAM

Here I've granted the app the Contributor role on the entire resource group. You can limit this further if you wish. For example, I could have granted it only the CDN Endpoint Contributor role on the resource group or on the CDN endpoint itself, but I use this app for other things that are beyond the scope of this article.

With the app created, I can now update the pipeline.

Update GitHub workflow

Next, I had to add another secret to my GitHub repository: `AZURE_ACCOM_BOT_CLIENTSECRET`. It contains the client secret I created for the service principal above.

Now, head over to your workflow. I first updated my environment settings to include details for the new service principal and the Azure Search admin key:

```yaml
env:
  # <existing variables>
  AZURE_ACCOM_BOT_CLIENTID: 63YoMama-2e26-W3rs-8Pnk-0fArmyB00ts1
  # AZURE_ACCOM_BOT_CLIENTSECRET: <secret>
  AZURE_ACCOM_BOT_TENANTID: 63YoMama-2e26-W3rs-8Pnk-0fArmyB00ts2
  AZURE_CDN_ACCOM_PROFILE: cdn-acccom
  AZURE_CDN_ACCOM_ENDPOINT: cdn-acccom
  # AZURE_SEARCH_ACCOM_ADMINKEY: <secret>
```
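The reindex step in the job also reads `AZURE_SEARCH_ACCOM_INSTANCE` and `AZURE_SEARCH_ACCOM_INDEXER`, which aren't in the variables listed here; presumably they are among the existing variables left over from the earlier Azure Search setup. If you're building the workflow from scratch, a minimal sketch of those two entries might look like this. The values are hypothetical placeholders, and the instance is assumed to be the search service name (the `<name>` part of `<name>.search.windows.net`).

```yaml
env:
  # <existing variables>
  # Hypothetical placeholder values; replace with your own search service
  # name (assumed to be the subdomain of search.windows.net) and indexer name.
  AZURE_SEARCH_ACCOM_INSTANCE: <search-service-name>
  AZURE_SEARCH_ACCOM_INDEXER: <indexer-name>
```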

The last step is to update the workflow by adding a new job after the existing build & deployment job. Here’s what it looks like:

```yaml
  ######################################################################
  # reindex search index
  ######################################################################
  search-reindex:
    name: Reindex Azure Cognitive Search index
    needs: [build-deploy]
    if: "github.ref == 'refs/heads/master' && !contains(github.event.head_commit.message,'[skip-ci]')"
    runs-on: ubuntu-latest
    steps:
      ######################################################################
      # sign in to Azure CLI with service principal
      ######################################################################
      - name: Login to Azure
        run: |
          az login --service-principal --tenant "$AZURE_ACCOM_BOT_TENANTID" \
            --username "$AZURE_ACCOM_BOT_CLIENTID" --password "$AZURE_ACCOM_BOT_CLIENTSECRET"
        env:
          AZURE_ACCOM_BOT_CLIENTID: ${{ env.AZURE_ACCOM_BOT_CLIENTID }}
          AZURE_ACCOM_BOT_CLIENTSECRET: ${{ secrets.AZURE_ACCOM_BOT_CLIENTSECRET }}
          AZURE_ACCOM_BOT_TENANTID: ${{ env.AZURE_ACCOM_BOT_TENANTID }}

      ######################################################################
      # Purge search index & search page(s) from CDN
      ######################################################################
      - name: Purge search assets from Azure CDN
        continue-on-error: true
        run: |
          echo "ℹ️ Purging index data file: /<OMITTED>/<OMITTED>"
          echo "ℹ️ Purging search page: /search"
          # backslashes keep this one command inside the literal (|) block
          az cdn endpoint purge --resource-group accom-site \
            --profile-name "$AZURE_CDN_ACCOM_PROFILE" --name "$AZURE_CDN_ACCOM_ENDPOINT" \
            --content-paths '/<OMITTED>/<OMITTED>' '/search' '/search/index.html'
          echo "✅ Purged index data file: /<OMITTED>/<OMITTED>"
          echo "✅ Purged search page: /search"
        env:
          AZURE_CDN_ACCOM_PROFILE: ${{ env.AZURE_CDN_ACCOM_PROFILE }}
          AZURE_CDN_ACCOM_ENDPOINT: ${{ env.AZURE_CDN_ACCOM_ENDPOINT }}

      ######################################################################
      # Use Azure REST API to invoke search indexer
      ######################################################################
      - name: Reindex Azure Cognitive Search index
        uses: andrewconnell/azure-search-index@1.0.1
        with:
          azure-search-instance: ${{ env.AZURE_SEARCH_ACCOM_INSTANCE }}
          azure-search-indexer: ${{ env.AZURE_SEARCH_ACCOM_INDEXER }}
          azure-search-admin-key: ${{ secrets.AZURE_SEARCH_ACCOM_ADMINKEY }}
```

Let me explain what this does:

- Create a new job: I first create a new job, `search-reindex`, that depends on the `build-deploy` job. I do this because I only want to reindex the site when the deployment succeeds. I also add a condition so that this job only runs when a build is triggered on the `master` branch and the commit message doesn't contain `[skip-ci]`. Technically, the branch check isn't necessary: `build-deploy` also only runs on `master`, and because this job depends on it, that alone would control it.

- Step: Login to Azure CLI: The first step in this job is to sign in to the Azure CLI with the service principal I created. By injecting the IDs and client secret as environment variables, they won't get written to the pipeline execution or diagnostic logs.

- Step: Purge CDN: I'm using an Azure CDN on my site for optimal performance. When the site gets updated, I want to make sure the search page is purged from the CDN, as well as the JSON file that Azure Search uses to index the site. The step is marked `continue-on-error: true`, so a failed purge doesn't block the reindex.

- Step: Reindex the site: The last step is to reindex the site. As I said above, the Azure CLI doesn't support this at the time of writing, so I'm using the Azure Search service REST API. To simplify things, I wrapped this step up in a custom GitHub Action that I've published to the GitHub Marketplace for others to use: GitHub Marketplace: Azure Cognitive Search Reindex

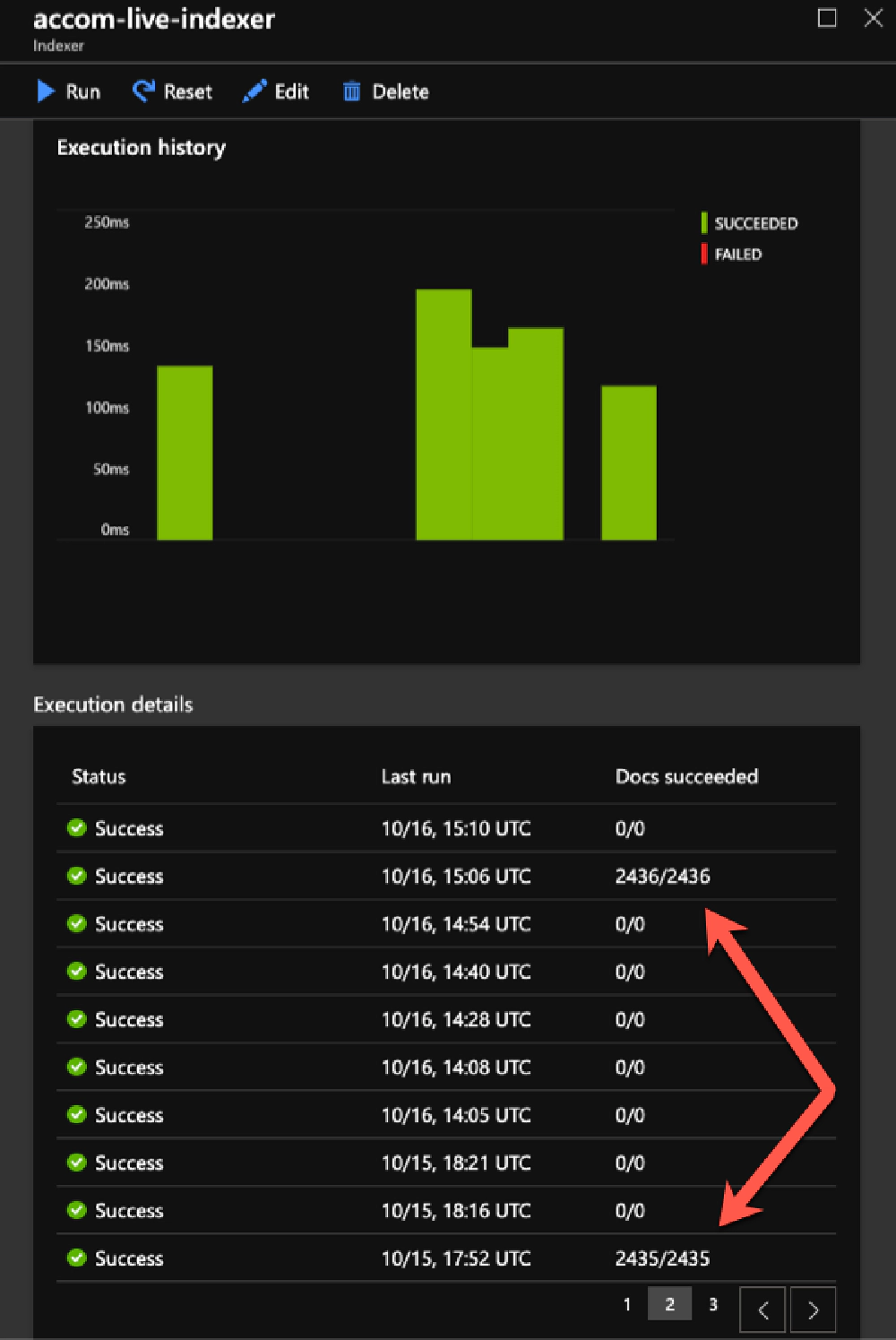
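If you'd rather not depend on a third-party action, the same kind of request can be made with `curl` from a `run` step. This is a minimal sketch, not the action's actual implementation: it assumes `AZURE_SEARCH_ACCOM_INSTANCE` holds the search service name (the subdomain of `search.windows.net`), it calls the documented Run Indexer endpoint with API version `2023-11-01`, and the step name and step-local variable names are hypothetical.

```yaml
      # Sketch: run the indexer directly through the Azure Search REST API.
      # Assumes AZURE_SEARCH_ACCOM_INSTANCE is the search service name.
      - name: Run search indexer via REST API (sketch)
        run: |
          # --fail turns an HTTP error (4xx/5xx) into a non-zero exit code,
          # which fails the step
          curl --fail --silent --show-error -X POST \
            -H "api-key: $SEARCH_ADMIN_KEY" \
            -H "Content-Length: 0" \
            "https://$SEARCH_INSTANCE.search.windows.net/indexers/$SEARCH_INDEXER/run?api-version=2023-11-01"
          echo "✅ Indexer run requested"
        env:
          # hypothetical step-local names mapped from the workflow settings
          SEARCH_INSTANCE: ${{ env.AZURE_SEARCH_ACCOM_INSTANCE }}
          SEARCH_INDEXER: ${{ env.AZURE_SEARCH_ACCOM_INDEXER }}
          SEARCH_ADMIN_KEY: ${{ secrets.AZURE_SEARCH_ACCOM_ADMINKEY }}
```

The service replies with `202 Accepted` and runs the indexer asynchronously, so a green step only means the run was queued, not that indexing finished.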

It may seem a bit strange when you look at your indexer results and see that 0/0 docs were indexed, but that only happens when the indexer doesn't detect a change to the file. When you actually add content to the site, it will pick up those files.

Azure Indexer Results

For instance, with my GitHub Actions setup, I automatically rebuild and deploy the site a few times a day, picking up any content I wrote earlier but scheduled to publish later. If nothing changed, there was nothing new to index. But as you can see from the indexer history above, when I added one new blog post, the indexer picked it up.
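To check those numbers from the workflow log instead of the portal, a step like the following could print the indexer's last result using the Get Indexer Status endpoint. It's a sketch that assumes `AZURE_SEARCH_ACCOM_INSTANCE` holds the search service name, uses API version `2023-11-01`, uses hypothetical step and variable names, and relies on `jq`, which GitHub's `ubuntu-latest` runners include. Because indexer runs are asynchronous, if this runs right after the reindex step, `lastResult` may still describe the previous run or report `inProgress`.

```yaml
      # Sketch: log the indexer's most recent result (status, items processed,
      # items failed) so a "0/0 docs" run is easy to tell apart from a failure.
      - name: Show last indexer result (sketch)
        continue-on-error: true
        run: |
          curl --fail --silent --show-error \
            -H "api-key: $SEARCH_ADMIN_KEY" \
            "https://$SEARCH_INSTANCE.search.windows.net/indexers/$SEARCH_INDEXER/status?api-version=2023-11-01" \
            | jq '.lastResult | {status, itemsProcessed, itemsFailed}'
        env:
          # hypothetical step-local names mapped from the workflow settings
          SEARCH_INSTANCE: ${{ env.AZURE_SEARCH_ACCOM_INSTANCE }}
          SEARCH_INDEXER: ${{ env.AZURE_SEARCH_ACCOM_INDEXER }}
          SEARCH_ADMIN_KEY: ${{ secrets.AZURE_SEARCH_ACCOM_ADMINKEY }}
```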